The topic of GenAI is everywhere now, but even with so much interest, many developers are still trying to understand what the real-world use cases are. Last year, Docker hosted an AI/ML Hackathon, and genuinely interesting projects were submitted.

In this AI/ML Hackathon post, we will dive into a winning submission, Code Explorer, in the hope that it sparks project ideas for you.

For developers, understanding and navigating codebases can be a constant challenge. Without access to your code, even popular AI assistants like ChatGPT can miss your project's context and struggle with complex logic or unique project requirements. Although large language models (LLMs) can be valuable companions during development, they may not always grasp the specific nuances of your codebase. Closing that gap requires giving the model a deeper understanding of your code and additional context to work from.

Imagine you’re working on a project that queries datasets for both cats and dogs. You already have functional code in DogQuery.py that retrieves dog data using pagination (a technique for fetching data in parts). Now, you want to update CatQuery.py to achieve the same functionality for cat data. Wouldn’t it be amazing if you could ask your AI assistant to reference the existing code in DogQuery.py and guide you through the modification process?

This is where Code Explorer, an AI-powered chatbot, comes in.

What makes Code Explorer unique?

The following demo, which was submitted to the AI/ML Hackathon, provides an overview of Code Explorer (Figure 1).

Code Explorer helps you find answers about your code by searching for relevant information based on the programming language and folder location. Unlike general-purpose chatbots, Code Explorer goes beyond generic coding knowledge. It uses an AI technique called retrieval-augmented generation (RAG) to understand your code's specific context, which allows it to provide more relevant and accurate answers based on your actual project.
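To make the retrieval idea concrete, here is a toy sketch of RAG in plain Python. It is not Code Explorer's implementation: the real app uses an embedding model and a Neo4j vector store, while this sketch swaps in a simple bag-of-words similarity so it runs with no dependencies. The function names (embed, retrieve, build_prompt) are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercased word/identifier tokens; a stand-in for a real embedding model.
    return re.findall(r"[a-z_][a-z0-9_]*", text.lower())


def embed(text: str) -> Counter:
    # Toy "embedding": a bag-of-words count vector (token -> count).
    return Counter(tokenize(text))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse count vectors.
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    # Rank code chunks by similarity to the question and keep the top k.
    q = embed(question)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(question: str, chunks: list[str], k: int = 2) -> str:
    # "Augment" the prompt: put the retrieved code in front of the question.
    context = "\n---\n".join(retrieve(question, chunks, k))
    return f"Use the following code to answer.\n{context}\n\nQuestion: {question}"
```

With a DogQuery.py chunk and a CatQuery.py chunk, a question about dog pagination pulls only the DogQuery.py code into the prompt, which is the behavior the DogQuery.py/CatQuery.py scenario relies on.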

Code Explorer supports a variety of programming languages, such as Swift (*.swift), Python (*.py), Java (*.java), and C# (*.cs). This tool can be useful for learning or debugging code projects such as Xcode projects, Android projects, AI applications, web development, and more.
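Supporting a language starts with deciding which files in the selected folder get indexed. The following is a minimal sketch, assuming a hypothetical EXTENSIONS mapping (the real app's list and language names may differ), of scanning a folder and grouping files by language before they are parsed:

```python
from pathlib import Path

# Hypothetical extension-to-language mapping; the real app's list may differ.
EXTENSIONS = {".py": "python", ".swift": "swift", ".java": "java", ".cs": "csharp"}


def collect_source_files(root: str) -> dict[str, list[Path]]:
    """Group every supported file under `root` (recursively) by language."""
    by_language: dict[str, list[Path]] = {}
    for path in sorted(Path(root).rglob("*")):
        # Match on the lowercased extension so "Main.PY" still counts as Python.
        language = EXTENSIONS.get(path.suffix.lower())
        if language and path.is_file():
            by_language.setdefault(language, []).append(path)
    return by_language
```

Grouping by language up front lets each group be handed to a language-aware splitter, which matters in the chunking step described later.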

Benefits of Code Explorer include:

- Effortless learning: Explore and understand your codebase more easily.

- Efficient debugging: Troubleshoot issues faster by getting insights from your code itself.

- Improved productivity: Spend less time deciphering code and more time building amazing things.

- Supports various languages: Works with popular languages like Python, Java, Swift, C#, and more.

Use cases include:

- Understanding complex logic: “Explain how the calculate_price function interacts with the get_discount function in billing.py.”

- Debugging errors: “Why is my getUserData function in user.py returning an empty list?”

- Learning from existing code: “How can I modify search.py to implement pagination similar to search_results.py?”

How does it work?

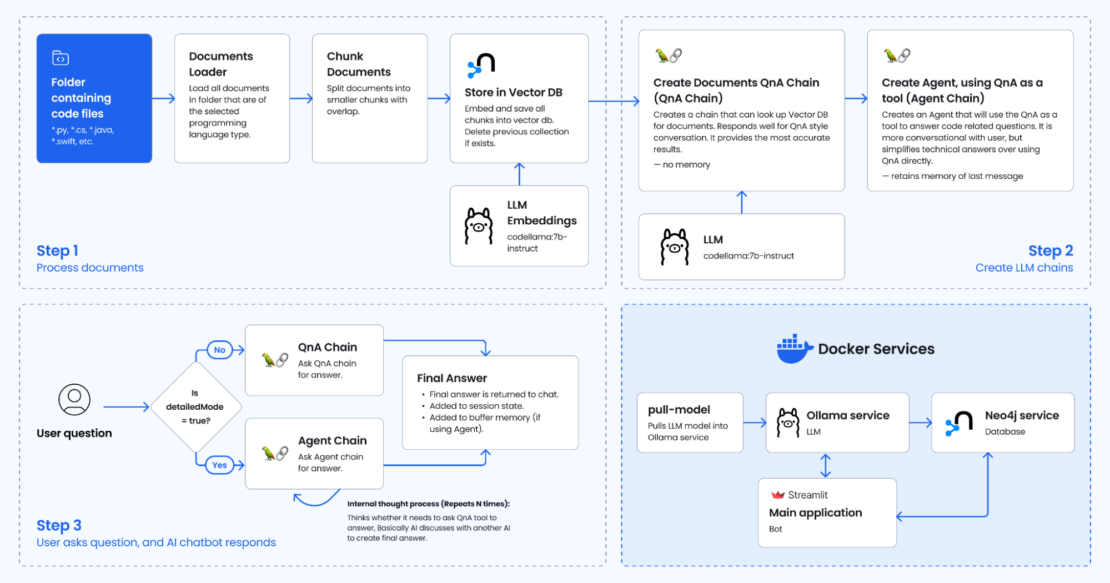

Code Explorer uses a RAG-based AI framework to provide context about your code to an existing LLM. Figure 2 shows how this works behind the scenes.

Step 1. Process documents

The user selects a codebase folder through the Streamlit app, which calls the process_documents function in db.py. This function performs the following actions:

- Parsing code: It reads and parses the code files within the selected folder, using language-specific parsers (e.g., the ast module for Python) to understand the code structure and syntax.

- Extracting information: It extracts relevant information from the code, such as:

  - Variable names and their types

  - Function names, parameters, and return types

  - Class definitions and properties

  - Code comments and docstrings

- Loading and chunking documents: It creates a RecursiveCharacterTextSplitter object based on the language. This object splits each document into smaller chunks of a specified size (5000 characters) with some overlap (500 characters), so that context carries across chunk boundaries.

- Creating a Neo4j vector store: It creates a Neo4j vector store, a type of database that stores and connects code elements using vectors. These vectors capture the meaning of each part of the code, so similar parts of the code end up close together.

  - Each code element (e.g., function, variable) is represented as a node in the Neo4j graph database.

  - Relationships between elements (e.g., function call, variable assignment) are represented as edges connecting the nodes.

Step 2. Create LLM chains

This step is triggered only after the codebase has been processed (Step 1).

Two LLM chains are created:

- Create Documents QnA chain: This chain allows users to talk to the chatbot in a question-and-answer style. When answering a coding question, it retrieves the relevant source code from the vector database.

- Create Agent chain: A separate Agent chain is created, which uses the QnA chain as a tool. You can think of it as an additional layer on top of the QnA chain that lets you communicate with the chatbot more casually. Under the hood, the agent may ask the QnA chain for help with a coding question, so one AI effectively discusses the user's question with another before returning the final answer. In testing, the agent tends to summarize rather than give the technical response that the QnA chain alone provides.

LangChain is used to orchestrate the chatbot pipeline.
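The relationship between the two chains can be sketched without LangChain. In this hypothetical illustration, an "LLM" is just any function from prompt to reply, the QnA chain puts retrieved code into the prompt, and the agent treats the QnA chain as a tool and then asks the LLM to turn the tool's answer into its reply. That final rewriting step is one plausible reason agent answers read more like summaries. None of these names are LangChain's API.

```python
from typing import Callable

# Hypothetical: an "LLM" here is any function that maps a prompt string to a reply string.
LLM = Callable[[str], str]


def make_qa_chain(llm: LLM, retrieve: Callable[[str], list[str]]) -> Callable[[str], str]:
    """QnA chain: retrieve relevant code chunks, then answer with them in the prompt."""
    def qa(question: str) -> str:
        context = "\n".join(retrieve(question))
        return llm(f"Context:\n{context}\n\nQuestion: {question}")
    return qa


def make_agent(llm: LLM, qa_tool: Callable[[str], str]) -> Callable[[str], str]:
    """Agent: decide whether to consult the QnA chain (its tool), then write the final reply."""
    def agent(question: str) -> str:
        decision = llm(f"Does this question need the codebase? Answer YES or NO.\n{question}")
        if decision.strip().upper().startswith("YES"):
            tool_answer = qa_tool(question)
            # The agent rewrites the tool's answer, which tends to condense it.
            return llm(f"Summarize this answer for the user:\n{tool_answer}")
        return llm(question)
    return agent
```

Passing a stub function as the LLM, as in a unit test, is enough to exercise both paths without a running model.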

Step 3. User asks questions and AI chatbot responds

The Streamlit app provides a chat interface for users to ask questions about their code. The user interacts with the Streamlit app’s chat interface, and user inputs are stored and used to query the LLM or the QA/Agent models. Based on the following factors, the app chooses how to answer the user:

- Codebase processed:

  - Detailed mode selected in the sidebar: The QA RAG chain is used. This mode leverages the processed codebase for in-depth answers.

  - Agent mode selected: Custom agent logic (built with the get_agent function) is used. This mode might provide more concise answers than the QA RAG chain.

- Codebase not processed:

  - The LLM chain is used directly if the user has not processed the codebase yet.
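These routing rules amount to a small decision function. The following is a minimal sketch with hypothetical names; how the real app implements the choice inside its Streamlit code may differ.

```python
from typing import Callable

Chain = Callable[[str], str]  # hypothetical: anything that turns a question into an answer


def choose_responder(codebase_processed: bool, detailed_mode: bool,
                     qa_chain: Chain, agent_chain: Chain, llm_chain: Chain) -> Chain:
    """Pick which chain answers the next chat message, following the rules above."""
    if not codebase_processed:
        return llm_chain  # no vector store yet, so fall back to the plain LLM
    return qa_chain if detailed_mode else agent_chain
```

Keeping the decision in one pure function makes the sidebar toggle easy to test in isolation from the UI.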

Getting started

To get started with Code Explorer, check the following:

- Ensure that you have installed the latest version of Docker Desktop.

- Ensure that you have Ollama running locally.

Then, complete the four steps explained below.

1. Clone the repository

Open a terminal window and run the following command to clone the sample application.

You should now have the following files in your CodeExplorer directory:

```
tree
.
├── LICENSE
├── README.md
├── agent.py
├── bot.Dockerfile
├── bot.py
├── chains.py
├── db.py
├── docker-compose.yml
├── images
│   ├── app.png
│   └── diagram.png
├── pull_model.Dockerfile
├── requirements.txt
└── utils.py

2 directories, 13 files
```

2. Create environment variables

Before running the GenAI stack services, open the .env file and modify the following variables according to your needs. This file stores environment variables that influence your application's behavior.

```
OPENAI_API_KEY=sk-XXXXX
LLM=codellama:7b-instruct
OLLAMA_BASE_URL=http://host.docker.internal:11434
NEO4J_URI=neo4j://database:7687
NEO4J_USERNAME=neo4j
NEO4J_PASSWORD=XXXX
EMBEDDING_MODEL=ollama
LANGCHAIN_TRACING_V2=true # false
LANGCHAIN_PROJECT=default
LANGCHAIN_API_KEY=ls__cbaXXXXXXXX06dd
```

Note:

- If using EMBEDDING_MODEL=sentence_transformer, uncomment the code in requirements.txt and chains.py. It was commented out to reduce code size.

- Make sure to set OLLAMA_BASE_URL=http://llm:11434 in the .env file when using the Ollama Docker container. If you're running on Mac, set OLLAMA_BASE_URL=http://host.docker.internal:11434 instead.
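A missing or mistyped variable often surfaces later as a confusing connection error, so it can help to validate the configuration at startup. The following is a minimal sketch, assuming a hypothetical load_settings helper that is not part of the repository, which checks the core variables from the .env file above and reports every missing one at once:

```python
import os
from dataclasses import dataclass
from typing import Mapping

# Variables this sketch treats as required (a hypothetical choice; adjust to your setup).
REQUIRED = ("LLM", "OLLAMA_BASE_URL", "NEO4J_URI",
            "NEO4J_USERNAME", "NEO4J_PASSWORD", "EMBEDDING_MODEL")


@dataclass(frozen=True)
class Settings:
    # Field order mirrors REQUIRED so the values can be passed positionally.
    llm: str
    ollama_base_url: str
    neo4j_uri: str
    neo4j_username: str
    neo4j_password: str
    embedding_model: str


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Fail fast with one clear message if any required variable is missing or empty."""
    missing = [name for name in REQUIRED if not env.get(name)]
    if missing:
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
    return Settings(*(env[name] for name in REQUIRED))
```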

3. Build and run Docker GenAI services

Run the following command to build and bring up Docker Compose services:

```
docker compose --profile linux up --build
```

You should see output similar to the following:

```
[+] Running 5/5
 ✔ Network codeexplorer_net Created 0.0s
 ✔ Container codeexplorer-database-1 Created 0.1s
 ✔ Container codeexplorer-llm-1 Created 0.1s
 ✔ Container codeexplorer-pull-model-1 Created 0.1s
 ✔ Container codeexplorer-bot-1 Created 0.1s
Attaching to bot-1, database-1, llm-1, pull-model-1
llm-1 | Couldn't find '/root/.ollama/id_ed25519'. Generating new private key.
llm-1 | Your new public key is:
llm-1 |
llm-1 | ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAIGEM2BIxSSje6NFssxK7J1+X+46n+cWTQufEQjMUzLGC
llm-1 |
llm-1 | 2024/05/23 15:05:47 routes.go:1008: INFO server config env="map[OLLAMA_DEBUG:false OLLAMA_LLM_LIBRARY: OLLAMA_MAX_LOADED_MODELS:1 OLLAMA_MAX_QUEUE:512 OLLAMA_MAX_VRAM:0 OLLAMA_NOPRUNE:false OLLAMA_NUM_PARALLEL:1 OLLAMA_ORIGINS:[http://localhost https://localhost http://localhost:* https://localhost:* http://127.0.0.1 https://127.0.0.1 http://127.0.0.1:* https://127.0.0.1:* http://0.0.0.0 https://0.0.0.0 http://0.0.0.0:* https://0.0.0.0:*] OLLAMA_RUNNERS_DIR: OLLAMA_TMPDIR:]"
llm-1 | time=2024-05-23T15:05:47.265Z level=INFO source=images.go:704 msg="total blobs: 0"
llm-1 | time=2024-05-23T15:05:47.265Z level=INFO source=images.go:711 msg="total unused blobs removed: 0"
llm-1 | time=2024-05-23T15:05:47.265Z level=INFO source=routes.go:1054 msg="Listening on [::]:11434 (version 0.1.38)"
llm-1 | time=2024-05-23T15:05:47.266Z level=INFO source=payload.go:30 msg="extracting embedded files" dir=/tmp/ollama2106292006/runners
pull-model-1 | pulling ollama model codellama:7b-instruct using http://host.docker.internal:11434
database-1 | Installing Plugin 'apoc' from /var/lib/neo4j/labs/apoc-*-core.jar to /var/lib/neo4j/plugins/apoc.jar
database-1 | Applying default values for plugin apoc to neo4j.conf
pulling manifest
pull-model-1 | pulling 3a43f93b78ec... 100% ▕████████████████▏ 3.8 GB
pulling manifest
pulling manifest
pull-model-1 | pulling 3a43f93b78ec... 100% ▕████████████████▏ 3.8 GB
pull-model-1 | pulling 8c17c2ebb0ea... 100% ▕████████████████▏ 7.0 KB
pull-model-1 | pulling 590d74a5569b... 100% ▕████████████████▏ 4.8 KB
pull-model-1 | pulling 2e0493f67d0c... 100% ▕████████████████▏ 59 B
pull-model-1 | pulling 7f6a57943a88... 100% ▕████████████████▏ 120 B
pull-model-1 | pulling 316526ac7323... 100% ▕████████████████▏ 529 B
pull-model-1 | verifying sha256 digest
pull-model-1 | writing manifest
pull-model-1 | removing any unused layers
pull-model-1 | success
llm-1 | time=2024-05-23T15:05:52.802Z level=INFO source=payload.go:44 msg="Dynamic LLM libraries [cpu cuda_v11]"
llm-1 | time=2024-05-23T15:05:52.806Z level=INFO source=types.go:71 msg="inference compute" id=0 library=cpu compute="" driver=0.0 name="" total="7.7 GiB" available="2.5 GiB"
pull-model-1 exited with code 0
database-1 | 2024-05-23 15:05:53.411+0000 INFO Starting...
database-1 | 2024-05-23 15:05:53.933+0000 INFO This instance is ServerId{ddce4389} (ddce4389-d9fd-4d98-9116-affa229ad5c5)
database-1 | 2024-05-23 15:05:54.431+0000 INFO ======== Neo4j 5.11.0 ========
database-1 | 2024-05-23 15:05:58.048+0000 INFO Bolt enabled on 0.0.0.0:7687.
database-1 | [main] INFO org.eclipse.jetty.server.Server - jetty-10.0.15; built: 2023-04-11T17:25:14.480Z; git: 68017dbd00236bb7e187330d7585a059610f661d; jvm 17.0.8.1+1
database-1 | [main] INFO org.eclipse.jetty.server.handler.ContextHandler - Started o.e.j.s.h.MovedContextHandler@7c007713{/,null,AVAILABLE}
database-1 | [main] INFO org.eclipse.jetty.server.session.DefaultSessionIdManager - Session workerName=node0
database-1 | [main] INFO org.eclipse.jetty.server.handler.ContextHandler - Started o.e.j.s.ServletContextHandler@5bd5ace9{/db,null,AVAILABLE}
database-1 | [main] INFO org.eclipse.jetty.webapp.StandardDescriptorProcessor - NO JSP Support for /browser, did not find org.eclipse.jetty.jsp.JettyJspServlet
database-1 | [main] INFO org.eclipse.jetty.server.handler.ContextHandler - Started o.e.j.w.WebAppContext@38f183e9{/browser,jar:file:/var/lib/neo4j/lib/neo4j-browser-5.11.0.jar!/browser,AVAILABLE}
database-1 | [main] INFO org.eclipse.jetty.server.handler.ContextHandler - Started o.e.j.s.ServletContextHandler@769580de{/,null,AVAILABLE}
database-1 | [main] INFO org.eclipse.jetty.server.AbstractConnector - Started http@6bd87866{HTTP/1.1, (http/1.1)}{0.0.0.0:7474}
database-1 | [main] INFO org.eclipse.jetty.server.Server - Started Server@60171a27{STARTING}[10.0.15,sto=0] @5997ms
database-1 | 2024-05-23 15:05:58.619+0000 INFO Remote interface available at http://localhost:7474/
database-1 | 2024-05-23 15:05:58.621+0000 INFO id: F2936F8E5116E0229C97F43AD52142685F388BE889D34E000D35E074D612BE37
database-1 | 2024-05-23 15:05:58.621+0000 INFO name: system
database-1 | 2024-05-23 15:05:58.621+0000 INFO creationDate: 2024-05-23T12:47:52.888Z
database-1 | 2024-05-23 15:05:58.622+0000 INFO Started.
```

The logs indicate that the application has successfully started all its components, including the LLM, Neo4j database, and the main application container. You should now be able to interact with the application through the user interface.

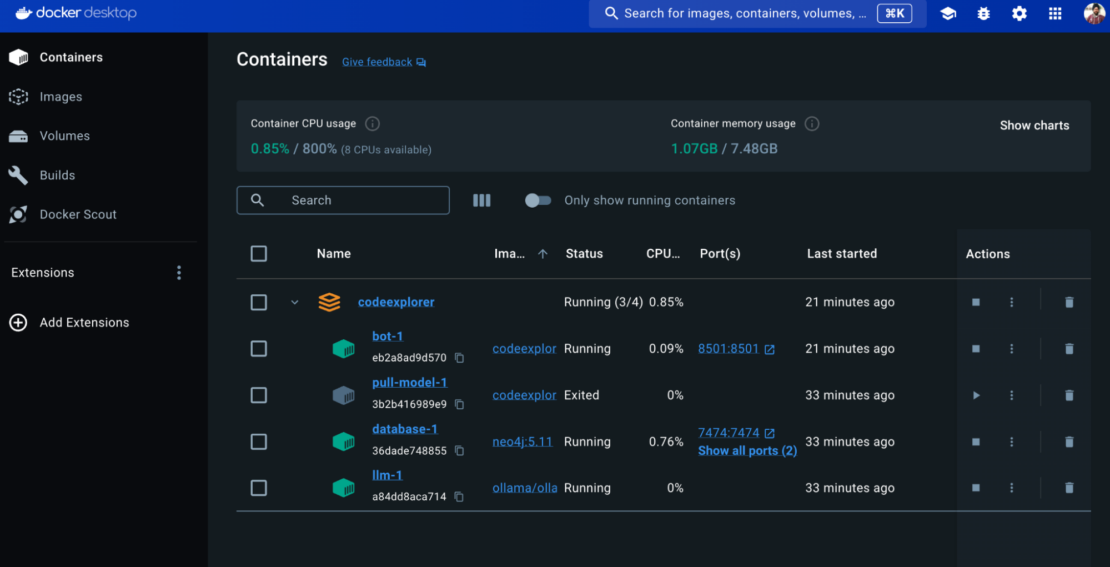

You can view the services via the Docker Desktop dashboard (Figure 3).

The Code Explorer stack consists of the following services:

Bot

- The bot service is the core application.

- Built with Streamlit, it provides the user interface through a web browser. Its build section in docker-compose.yml uses a Dockerfile named bot.Dockerfile to build a custom image containing your Streamlit application code.

- This service exposes port 8501, which makes the bot UI accessible through a web browser.

Pull model

- This service downloads the codellama:7b-instruct model.

- The model is based on Llama 2, which achieves performance similar to OpenAI's LLMs, and is further trained on code.

- The codellama:7b-instruct variant is additionally fine-tuned to understand and respond to instructions in natural language.

- This specialization makes it particularly adept at handling questions about code.

Note: You may notice that the pull-model-1 service exits with code 0, which indicates successful execution. This service exists only to download the LLM (codellama:7b-instruct), so once the download is complete, it has nothing left to do and exits.

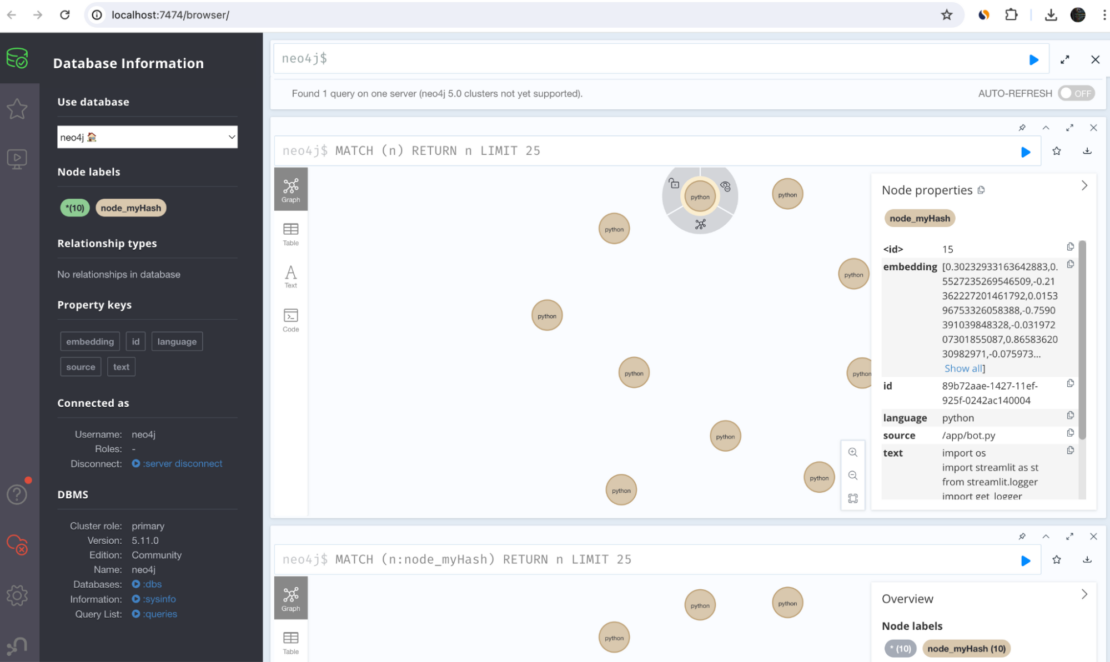

Database

- This service manages a Neo4j graph database.

- It stores and retrieves vector embeddings: numerical representations of the code chunks that make it possible to find the chunks most relevant to a question and pass them to the LLM as context.

- The Neo4j vector database can be explored at http://localhost:7474 (Figure 4).
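For a sense of what vector retrieval looks like at the database level, Neo4j 5.11 and later expose vector indexes through the db.index.vector.queryNodes procedure. The sketch below only builds the Cypher query and its parameters; the index name (code_chunks) and the text property on each node are assumptions, so check them against your own index in the Neo4j Browser.

```python
def vector_search_query(index_name: str, k: int, embedding: list[float]) -> tuple[str, dict]:
    """Build a Cypher query that returns the k stored chunks most similar to `embedding`."""
    if k <= 0:
        raise ValueError("k must be positive")
    query = (
        "CALL db.index.vector.queryNodes($index_name, $k, $embedding) "
        "YIELD node, score "
        "RETURN node.text AS text, score "  # assumes chunks keep their text in a `text` property
        "ORDER BY score DESC"
    )
    params = {"index_name": index_name, "k": k, "embedding": embedding}
    return query, params
```

With the official neo4j Python driver, you could run the result as driver.execute_query(query, params), where driver comes from GraphDatabase.driver(uri, auth=(user, password)). The embedding passed in must come from the same embedding model used when the chunks were stored.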

LLM

- This service acts as the LLM host, utilizing the Ollama framework.

- It manages the downloaded LLM model (not the embedding), making it accessible for use by the bot application.
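The bot reaches the model over Ollama's HTTP API. As a hedged sketch of a direct call (the app itself goes through LangChain), the standard library is enough to POST to Ollama's /api/generate endpoint; with "stream": false, Ollama returns a single JSON object whose response field holds the generated text. The helper names are hypothetical, and the base URL should match your OLLAMA_BASE_URL.

```python
import json
import urllib.request


def build_generate_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming POST to Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        base_url.rstrip("/") + "/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(base_url: str, model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the request and return the generated text (needs a running Ollama server)."""
    request = build_generate_request(base_url, model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["response"]
```

From a container on the same Compose network, a call might look like generate("http://llm:11434", "codellama:7b-instruct", "Explain what db.py does."). The first request can be slow while Ollama loads the model into memory, hence the generous timeout.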

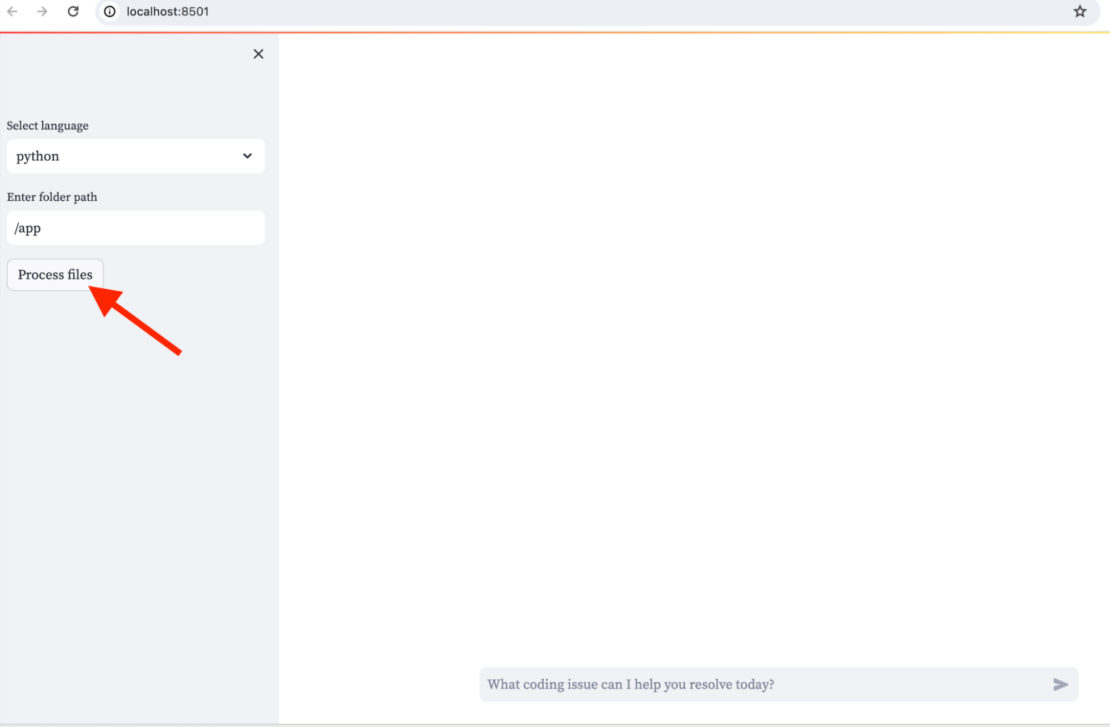

4. Access the application

You can now view your Streamlit app in your browser by accessing http://localhost:8501 (Figure 5).

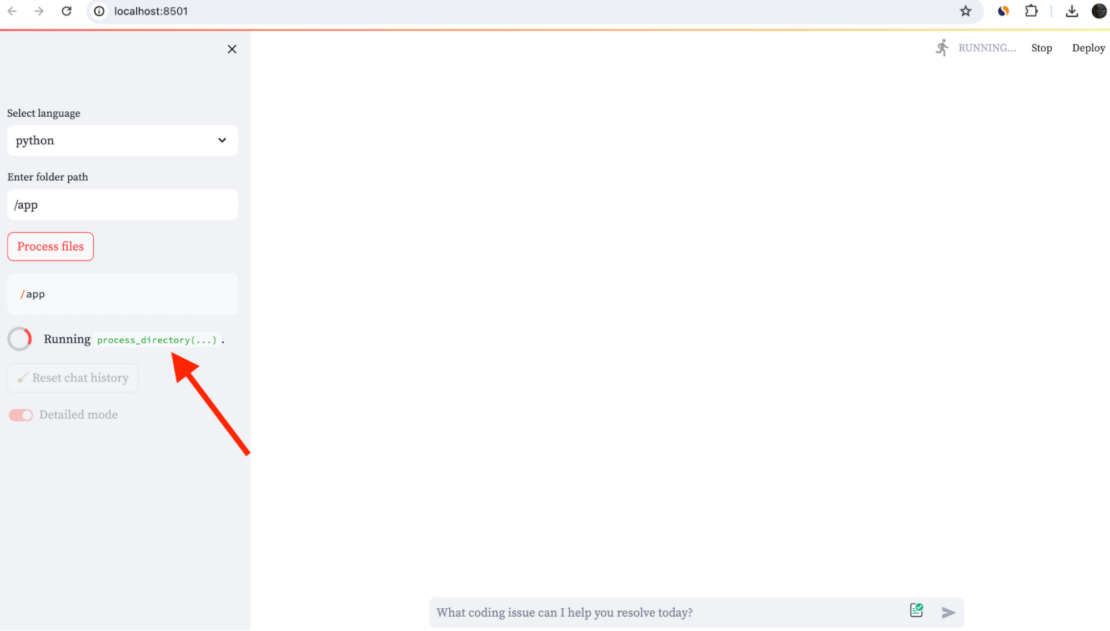

In the sidebar, enter the path to your code folder and select Process files (Figure 6). Then, you can start asking questions about your code in the main chat.

You will find a toggle switch in the sidebar. Detailed mode is enabled by default (detailedMode=true). In this mode, the QA RAG chain is used, which leverages the processed codebase for in-depth answers.

When you toggle the switch to the other mode (detailedMode=false), the Agent chain is selected. This is similar to one AI discussing the question with another AI to produce the final answer. In testing, the agent tends to summarize rather than give the technical response that the QA chain alone provides.

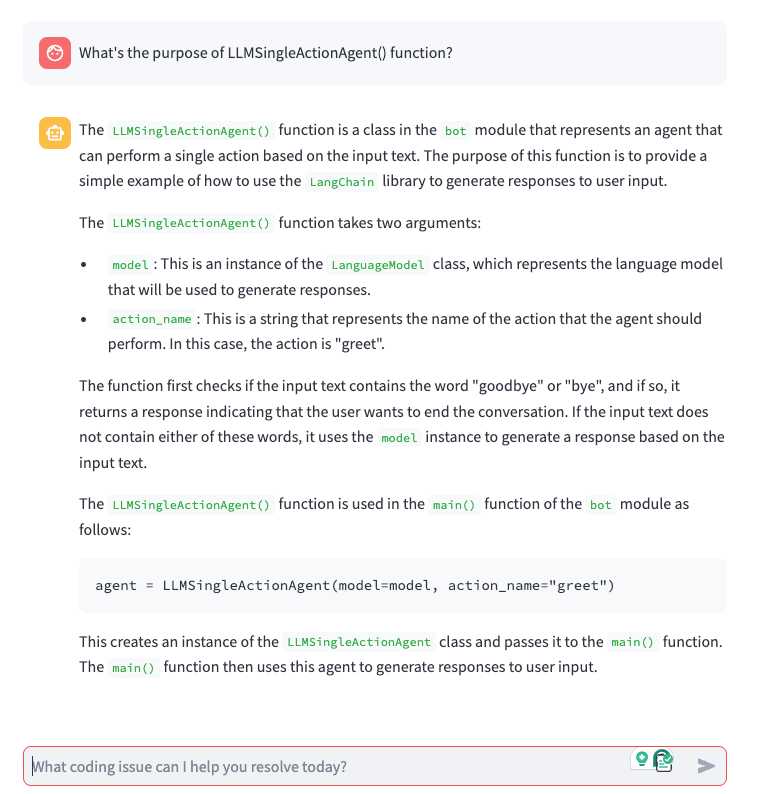

Here’s a result when detailedMode=true (Figure 7):

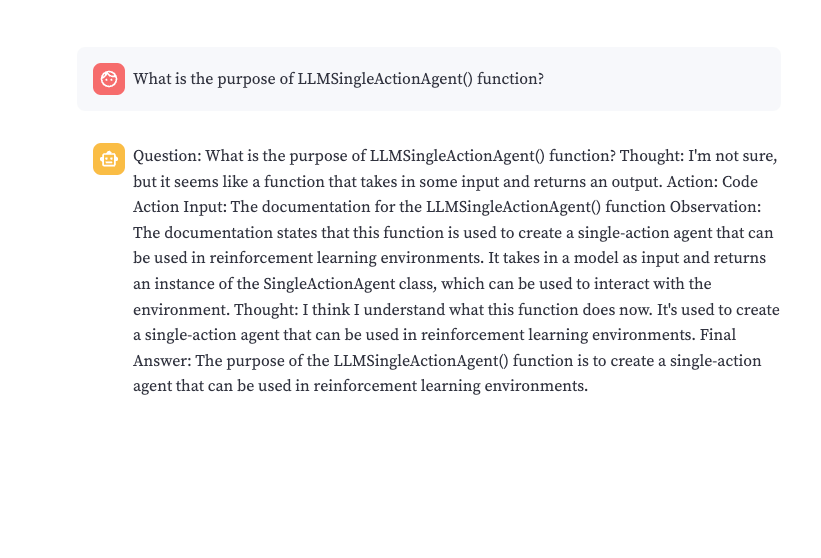

Figure 8 shows a result when detailedMode=false:

Start exploring

Code Explorer, powered by the GenAI Stack, offers a compelling solution for developers seeking AI assistance with coding. This chatbot uses RAG to delve into your codebase and provide insightful answers to your specific questions. Docker containers ensure smooth operation, while LangChain orchestrates the workflow. Neo4j stores code representations for efficient analysis.

Explore Code Explorer and the GenAI Stack to unlock the potential of AI in your development journey!
